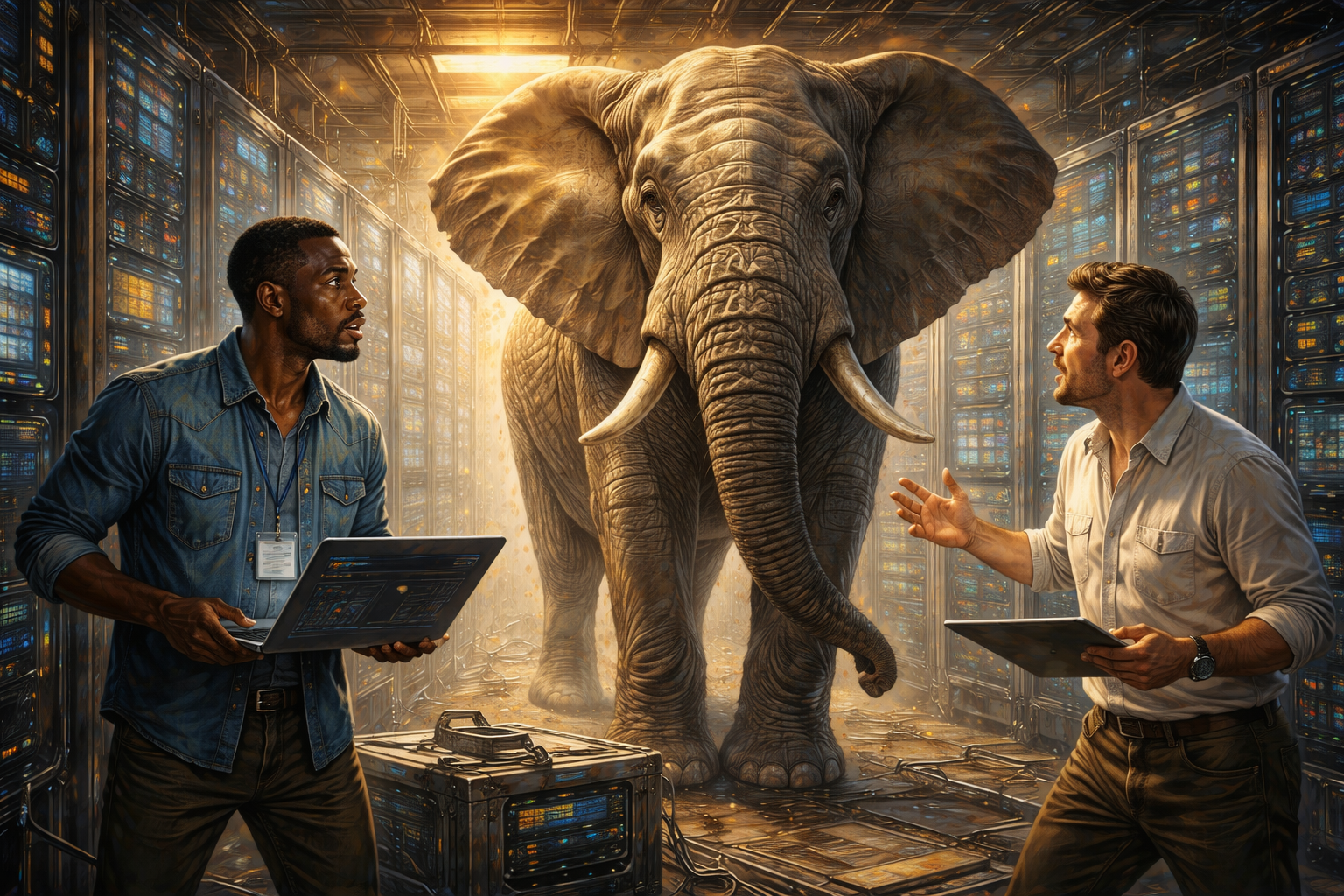

Introduction: The Elephant in the Server Room

Picture a teenager who eats everything in the fridge, drinks all the milk, and then complains they are still hungry. That is what training massive AI models feels like for researchers. These systems are powerful, but they are also greedy. They swallow data, demand electricity by the megawatt, and strain hardware like few technologies before them.

The result is breathtaking breakthroughs paired with eye-watering bills. Efficiency is not a side quest here. It is the main challenge. If these models are going to scale responsibly, they have to get cheaper, faster, and less wasteful.

Behind every slick demo you see on social media sits an army of engineers sweating over hardware bottlenecks, latency spikes, and compute costs. This is the elephant in the server room: the fact that artificial intelligence may collapse under its own weight if it doesn’t learn to slim down.

The Speed Problem

Imagine asking a question and waiting thirty seconds for an answer. In the world of AI, that feels like forever. Latency, the time between request and response, is one of the biggest frustrations. A trader in finance can’t wait for a delayed output when millions hang in the balance. A gamer doesn’t tolerate lag when an AI-driven opponent takes too long to react.

The problem stems from the size of these models. The larger they get, the more math they need to churn through. It’s like asking a genius to solve every problem by reciting the encyclopedia first. Engineers are experimenting with tricks to cut response times. They prune unnecessary parts of the model, compress layers, or redesign architectures to run more smoothly.

Some use specialized hardware like GPUs and TPUs to handle the load. Still, every improvement feels like a tug-of-war between speed and accuracy. The story of AI’s future will partly be told by how well we shrink those wait times. Because in a world addicted to instant gratification, even a five-second pause feels like an eternity.

Energy, the Hidden Price Tag

Few people think about the electricity behind a chatbot reply, but the numbers are staggering. Training one large model can consume as much energy as hundreds of households use in a year. A startup founder once joked that his company’s biggest expense wasn’t payroll but the power bill. Jokes aside, this is serious business.

Data centers run hot, sucking up electricity not only for the compute itself but for the cooling systems that stop servers from frying. The environmental impact is real, and so is the financial strain. Efficiency here means finding ways to do more with less. Some labs are testing algorithms that need fewer passes over the same data. Others are placing data centers near renewable energy sources or even underwater to take advantage of natural cooling.

Every watt saved is money kept in the bank and carbon kept out of the sky. The irony is that AI is often touted as a tool for sustainability, yet its own appetite can undermine that goal. The challenge is making sure the machines designed to solve problems aren’t creating bigger ones behind the curtain.

Hardware Headaches

Think of AI models as race cars. They go fast, but only if the track and the engine are built to handle the speed. Right now, hardware is struggling to keep up with the demands of ever-growing models. Traditional CPUs choke on the workload because they are built for a handful of sequential tasks, not the thousands of parallel matrix calculations these models require, which is why GPUs became the standard. Then TPUs and other custom chips entered the scene, promising better efficiency.

But even those are not magic bullets. They are expensive, hard to manufacture, and often in short supply. Stories of companies waiting months to secure enough GPUs are common. Some even joke that graphics cards are the new oil. Hardware bottlenecks mean innovation is sometimes slowed not by ideas but by supply chains.

The scramble for chips has created a global race, with governments investing heavily in semiconductor production to secure their share of the pie. Without breakthroughs in hardware, even the smartest optimizations will hit a ceiling. It’s a reminder that behind every sleek software demo lies a warehouse full of humming, overheating machines that need constant care just to keep the show running.

Data Pipelines, the Unsung Hero

It’s easy to obsess over model size and hardware, but none of it matters if the data pipeline is broken. Picture a kitchen where world-class chefs stand ready, but the delivery truck keeps showing up late with missing ingredients. That’s what bad pipelines feel like. Models starve without data fed in the right way at the right speed.

Engineers spend huge amounts of time cleaning, organizing, and streaming data so the model can learn efficiently. One often-repeated anecdote involves a company that realized 80 percent of its training costs were wasted on garbage data. They were essentially teaching a machine to master nonsense. Optimizing pipelines is about quality as much as speed.

Feeding cleaner data reduces the strain on compute and cuts training time dramatically. Companies now treat pipeline design as seriously as model architecture. After all, even the most brilliant algorithm collapses when it’s built on a foundation of noise. It may not grab headlines, but a strong data pipeline is the difference between a flashy demo and a reliable system.
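To make the idea of "cleaning" a little more concrete, here is a minimal sketch of one filtering step a pipeline might include. It is only an illustration: the function name `clean_stream` and the `min_chars` threshold are hypothetical, and real pipelines usually add far more (language detection, near-duplicate detection, quality scoring). The sketch streams records one at a time, drops entries that are too short to be useful, and skips exact duplicates after normalizing whitespace and case.

```python
import hashlib


def clean_stream(records, min_chars=20):
    """Yield cleaned records, skipping short entries and exact duplicates.

    `min_chars` is an illustrative threshold, not a recommended value.
    """
    seen = set()
    for text in records:
        # Collapse runs of whitespace so formatting noise does not hide duplicates.
        tidy = " ".join(text.split())
        if len(tidy) < min_chars:
            continue  # too short to teach the model anything
        # Hash the lowercased text so we store fingerprints, not full records.
        digest = hashlib.sha256(tidy.lower().encode("utf-8")).hexdigest()
        if digest in seen:
            continue  # already fed this record to the model once
        seen.add(digest)
        yield tidy


raw = [
    "  The quick brown fox jumps over the lazy dog. ",
    "the quick  brown fox jumps over the lazy dog.",
    "ok",
    "",
    "A second, genuinely different training sentence.",
]
cleaned = list(clean_stream(raw))
```

Because the function is a generator, it never holds the whole dataset in memory, which fits the "right data at the right speed" point: the model can start consuming records while the rest are still being filtered.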

The Scaling Dilemma

A researcher once quipped that the easiest way to improve an AI model is to make it bigger. Add more parameters, feed it more data, and watch the performance jump. That recipe worked for years, but it is now running into walls. Bigger is not always better when costs balloon and infrastructure buckles.

Scaling up means renting more cloud servers, buying more chips, and paying more engineers to wrangle the complexity. For a handful of tech giants, this is still possible. For smaller players, it’s like trying to compete in a race where the entry fee alone bankrupts you. The industry is at a crossroads.

Do we keep chasing scale at any cost, or do we pivot to smarter, leaner models that can deliver nearly the same performance with a fraction of the resources? Some startups are betting on the latter, showing that lightweight models trained cleverly can punch above their weight. The choice between brawn and brains will shape the future of AI development.

Tricks of the Trade

One story often told in research circles is about a team that trimmed their model’s size by half without losing accuracy. They used a method called pruning, which removes weights or whole neurons that contribute little to the output, the way a gardener trims dead branches. Another group used quantization, storing the model’s numbers at lower precision (for example, 8-bit integers instead of 32-bit floats), saving space without sacrificing too much performance.
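For readers who want to see the two ideas in miniature, here is a toy sketch in plain Python. It is not how any particular team or library does it: the function names are made up for illustration, the "model" is just a short list of numbers, and the pruning rule (drop the smallest weights by magnitude) and the quantization scheme (symmetric 8-bit) are only one common choice among several.

```python
def prune_by_magnitude(weights, fraction):
    """Return a copy of `weights` with the smallest-magnitude `fraction` set to zero."""
    k = int(len(weights) * fraction)
    # Indices of the k weights closest to zero: the "dead branches".
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    drop = set(order[:k])
    return [0.0 if i in drop else w for i, w in enumerate(weights)]


def quantize_int8(weights):
    """Map floats to integers in [-127, 127]; return the integers and the scale."""
    max_abs = max((abs(w) for w in weights), default=0.0)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate floats from the stored integers."""
    return [q * scale for q in quantized]


weights = [0.5, -0.01, 0.02, -1.27, 0.9, 0.003]
pruned = prune_by_magnitude(weights, 0.5)   # half the weights become zero
quantized, scale = quantize_int8(pruned)    # each weight now fits in one byte
restored = dequantize(quantized, scale)     # close to `pruned`, not identical
```

The restored values differ from the originals by at most half a quantization step, which is the "without sacrificing too much" part of the trade: a small, bounded error in exchange for storing one byte per weight instead of four.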

These methods sound technical, but the principle is simple: don’t waste resources on what doesn’t matter. It’s like a student highlighting every sentence in a textbook. At first, it feels thorough. In reality, it makes the book impossible to study. Smarter optimization is about focusing attention where it counts.

These tricks may not make headlines like shiny new models, but they are what keep costs from spiraling and systems from overheating. Behind every efficient AI sits a team quietly asking, “What can we throw out without breaking the magic?”

Money Talks

Efficiency is not just an engineering puzzle. It’s an economic one. Cloud bills for training large models can soar into the millions. Startups burn through funding just to keep experiments running. Even big companies grumble at the price tag.

One venture capitalist joked that investing in AI startups was really investing in cloud providers, since that’s where most of the money ends up. Efficiency becomes a survival strategy. A company that figures out how to train a model at half the cost suddenly has a competitive edge. It can experiment more, iterate faster, and offer cheaper products. In some ways, the race for better infrastructure is really a race for better margins.

The models may get the spotlight, but the accountants are the ones sweating in the background. If AI is going to be sustainable as an industry, it cannot bleed money forever. Efficiency is not just a technical detail. It is the difference between a research project and a viable business.

Environmental Pressure

As AI adoption grows, so does the spotlight on its carbon footprint. A journalist once compared training a single large model to flying a passenger plane across the world hundreds of times. That image stuck because it forced people to see the hidden cost of every clever output. Companies now face pressure from activists, regulators, and customers to prove they are not wrecking the planet in the name of progress.

This has sparked a wave of green AI initiatives. Data centers are being relocated to regions with abundant renewable energy. Algorithms are being tuned to reduce unnecessary computation. Researchers are publishing energy usage metrics alongside accuracy results to keep themselves honest.

Whether this is enough remains to be seen. The truth is, people are less impressed by magical technology if it comes with a climate bill. Efficiency here is not just about saving money. It’s about saving face in a world that increasingly ties reputation to responsibility.

The Future of Infrastructure

Looking ahead, the conversation about infrastructure is shifting. Instead of one giant model ruling them all, we may see swarms of smaller, specialized models working together. This approach mimics real life. A carpenter doesn’t use one tool for every task. They reach for the hammer, the saw, or the chisel as needed.

Similarly, distributed systems could reduce the strain on hardware while still delivering high performance. Edge computing adds another twist, pushing smaller models closer to the devices that need them. Imagine your phone handling tasks locally instead of pinging a faraway server. That reduces latency, cuts bandwidth, and saves energy.
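One way to picture the "right tool for the task" idea is a small router that tries a local specialist first and only falls back to a big remote model when nothing on the device can handle the request. The sketch below is purely illustrative: `make_router` and the three stand-in handlers are hypothetical, and the "models" are ordinary functions so the example runs anywhere.

```python
from typing import Callable, Dict, Tuple

Handler = Callable[[str], str]


def make_router(local_specialists: Dict[str, Handler], remote_fallback: Handler):
    """Build a routing function that prefers on-device specialists."""

    def route(task: str, payload: str) -> Tuple[str, str]:
        handler = local_specialists.get(task)
        if handler is not None:
            # A small specialist exists: answer on the device, no network trip.
            return "local", handler(payload)
        # No specialist for this task: pay the latency cost of the large model.
        return "remote", remote_fallback(payload)

    return route


# Stand-ins for small on-device models (hypothetical).
def tiny_spellcheck(text: str) -> str:
    return text.replace("teh", "the")


def tiny_wordcount(text: str) -> str:
    return str(len(text.split()))


# Stand-in for a call to a large hosted model (hypothetical).
def big_remote_model(text: str) -> str:
    return f"[remote answer for: {text}]"


route = make_router(
    {"spellcheck": tiny_spellcheck, "wordcount": tiny_wordcount},
    big_remote_model,
)
where, answer = route("spellcheck", "fix teh typo")
```

The design choice worth noticing is that the expensive path is the exception rather than the default, which is where the savings in latency, bandwidth, and energy would come from.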

These ideas are still being tested, but they hint at a future where efficiency is baked into the design from the ground up. It won’t be about one colossal model guzzling resources. It will be about networks of leaner, smarter systems cooperating in ways that feel almost invisible to the user.

Conclusion: Leaner, Smarter, Better

The story of efficiency in AI is not glamorous, but it is crucial. Without it, the technology risks collapsing under its own hunger for data, energy, and money. The heroes of this story are not the flashy demos but the quiet optimizations: the trimmed networks, the cleaned pipelines, the smarter chips.

They are what make it possible for AI to move from lab experiments to everyday tools. As users, we may never see the tangled wires, the humming servers, or the anxious engineers watching power meters spike. But we will feel the impact when models respond faster, cost less, and tread lighter on the planet.

Efficiency is not about limiting ambition. It is about making sure ambition can last. If AI is going to be more than a passing fad, it has to learn the oldest lesson in human history: how to do more with less. The future belongs not to the biggest, but to the smartest.

If this made you pause, that pause matters.

Progress—whether in ethics, automation, or AI—doesn’t happen by accident. It happens when we step back, question assumptions, and design with intention. Every choice, workflow, and line of code reflects what we value most. Take what stood out, sit with it, and notice how it shapes your next action or conversation. That’s where meaningful innovation begins.

Canty